International Journal of Robotic Engineering

(ISSN: 2631-5106)

Volume 5, Issue 2

Research Article

DOI: 10.35840/2631-5106/4127

Random Coin Recognition and Robotic Pick-and-Place

Ching-Long Shih* and Muhammad Qomaruz Zaman


Figures

Figure 1: The robotic system architecture includes an industrial 6-axis robot arm, linear gripper, coin gripping mechanism, and eye-in-hand camera.

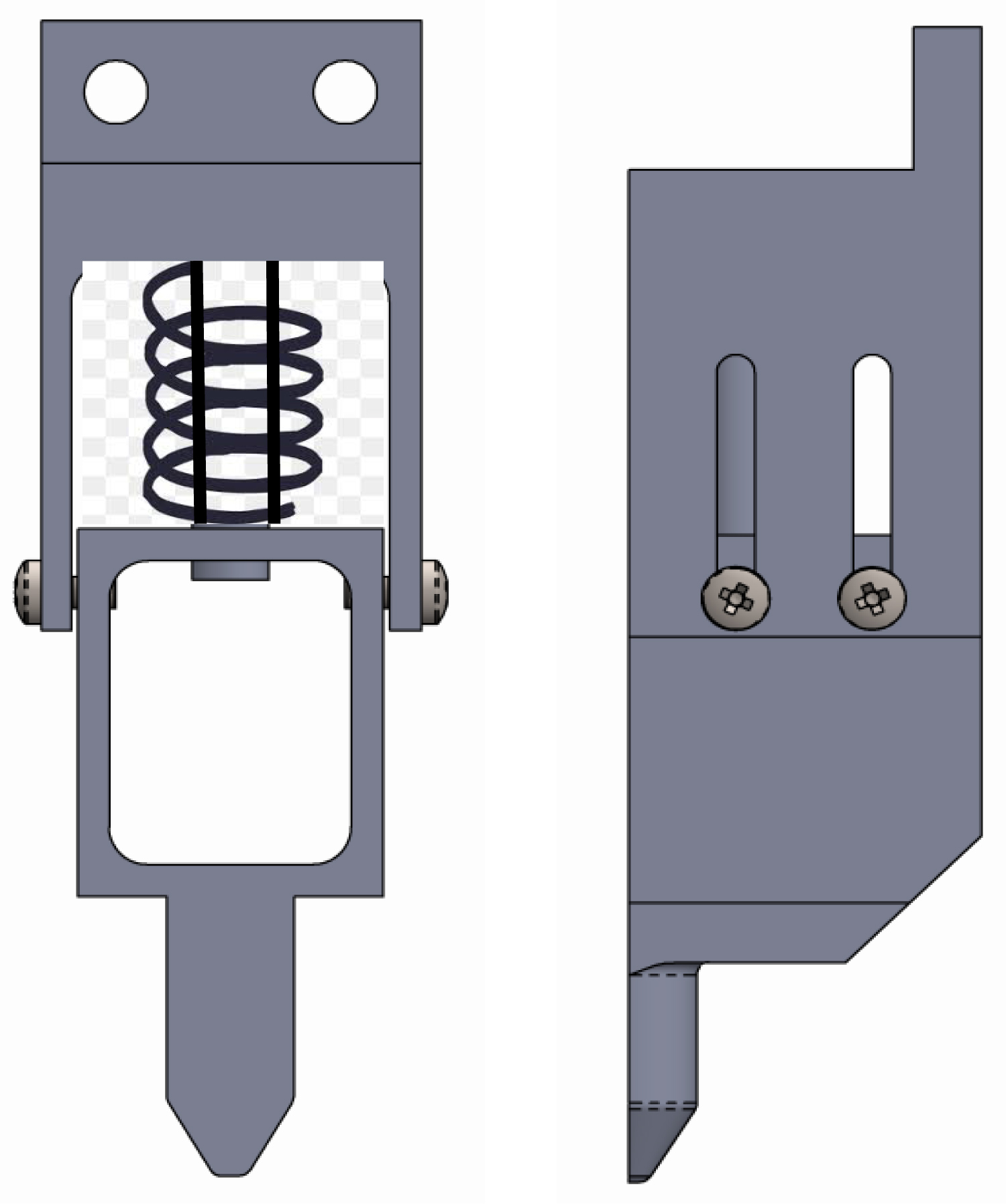

Figure 2: The coin gripping mechanism with an inner buffer spring; the left is the front view and the right is the side view.

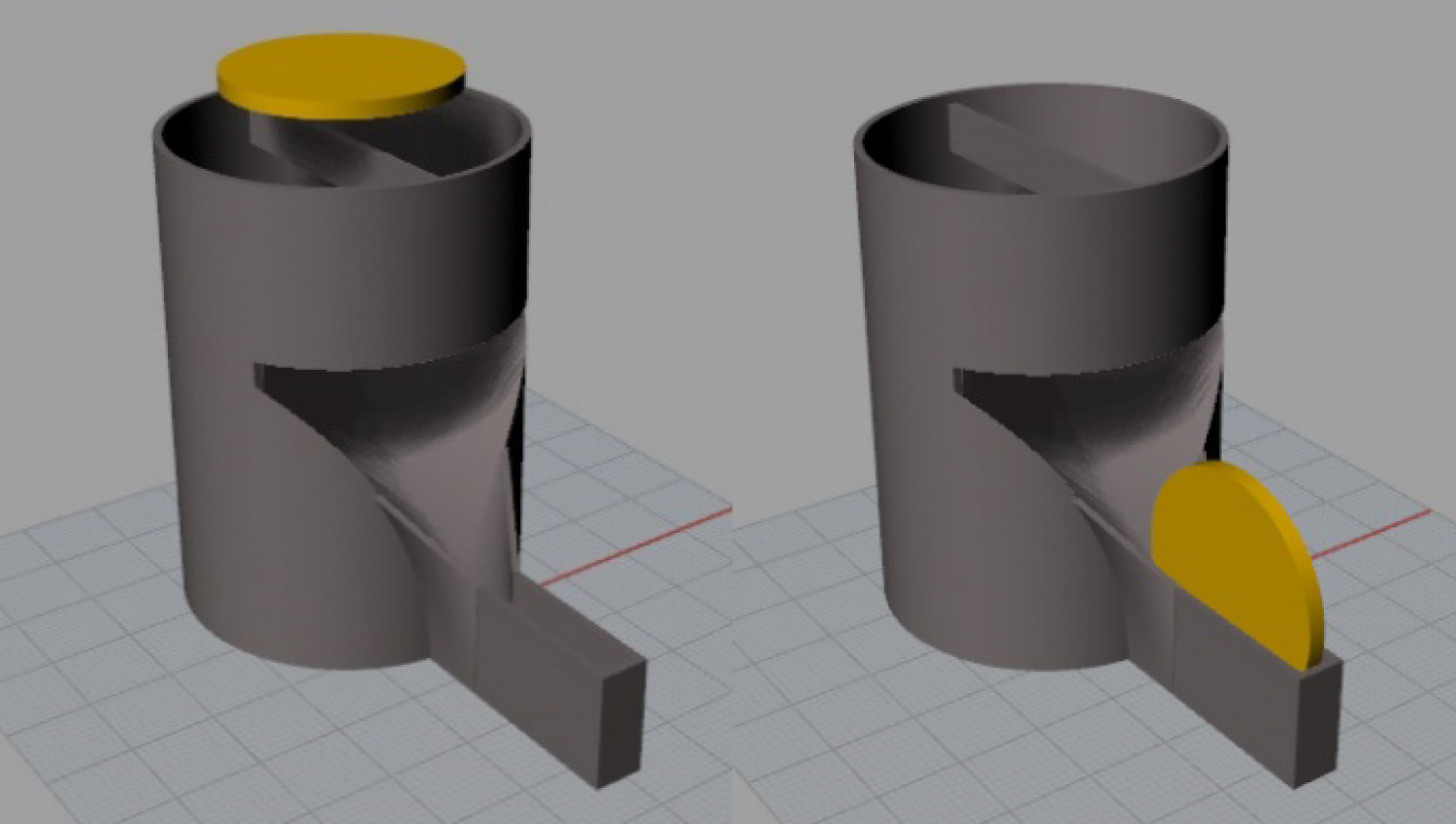

Figure 3: The coin flip mechanism, from horizontal (left) to vertical (right).

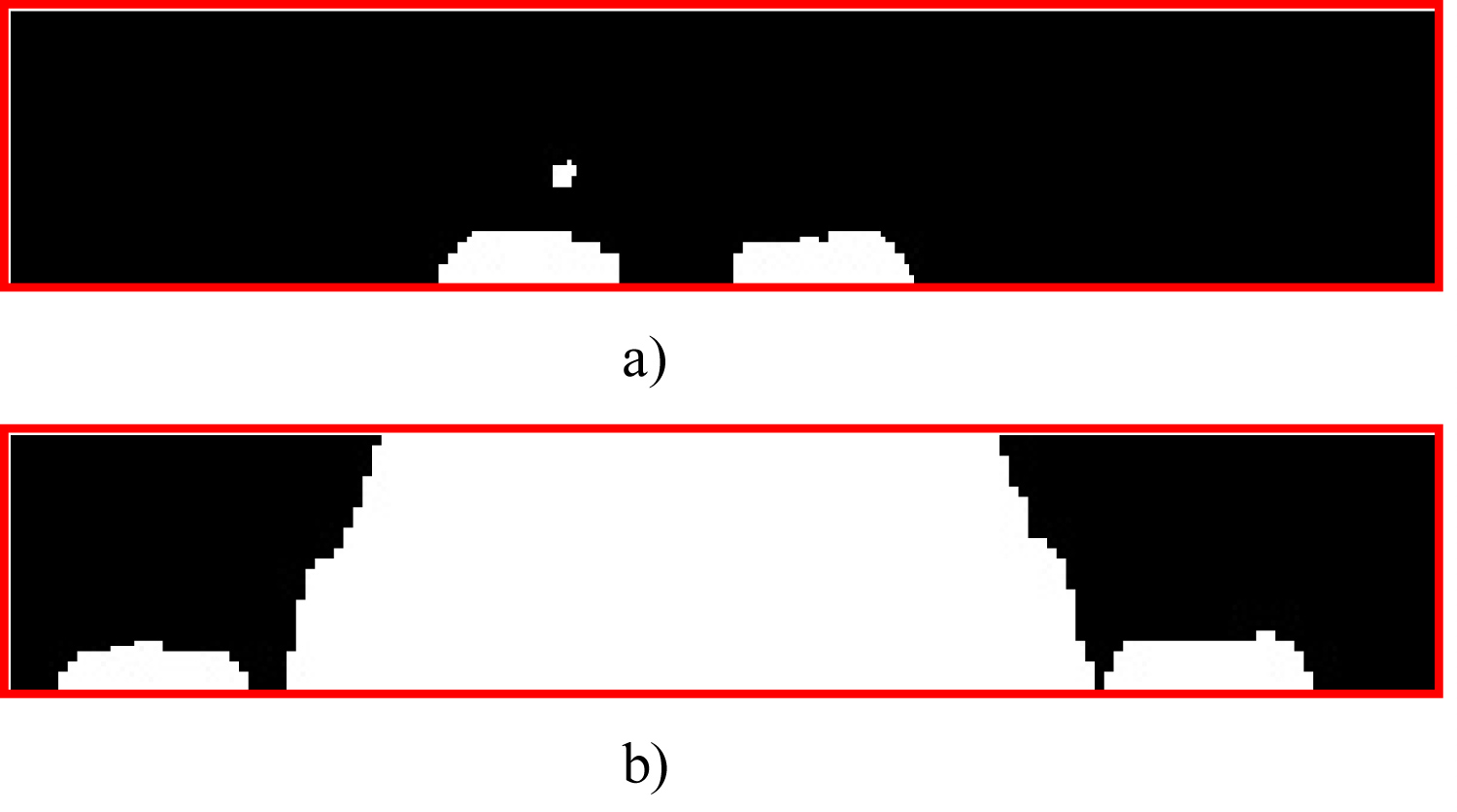

Figure 4: Examples of the connected area in the region of interest when the robot gripper is not holding a coin and when it is holding a coin: (a) Robot gripper not holding a coin; (b) Robot gripper holding a coin.

Figure 5: The control strategy of the robot picking-and-placing a coin.

Figure 6: Possible case of independent and separated...

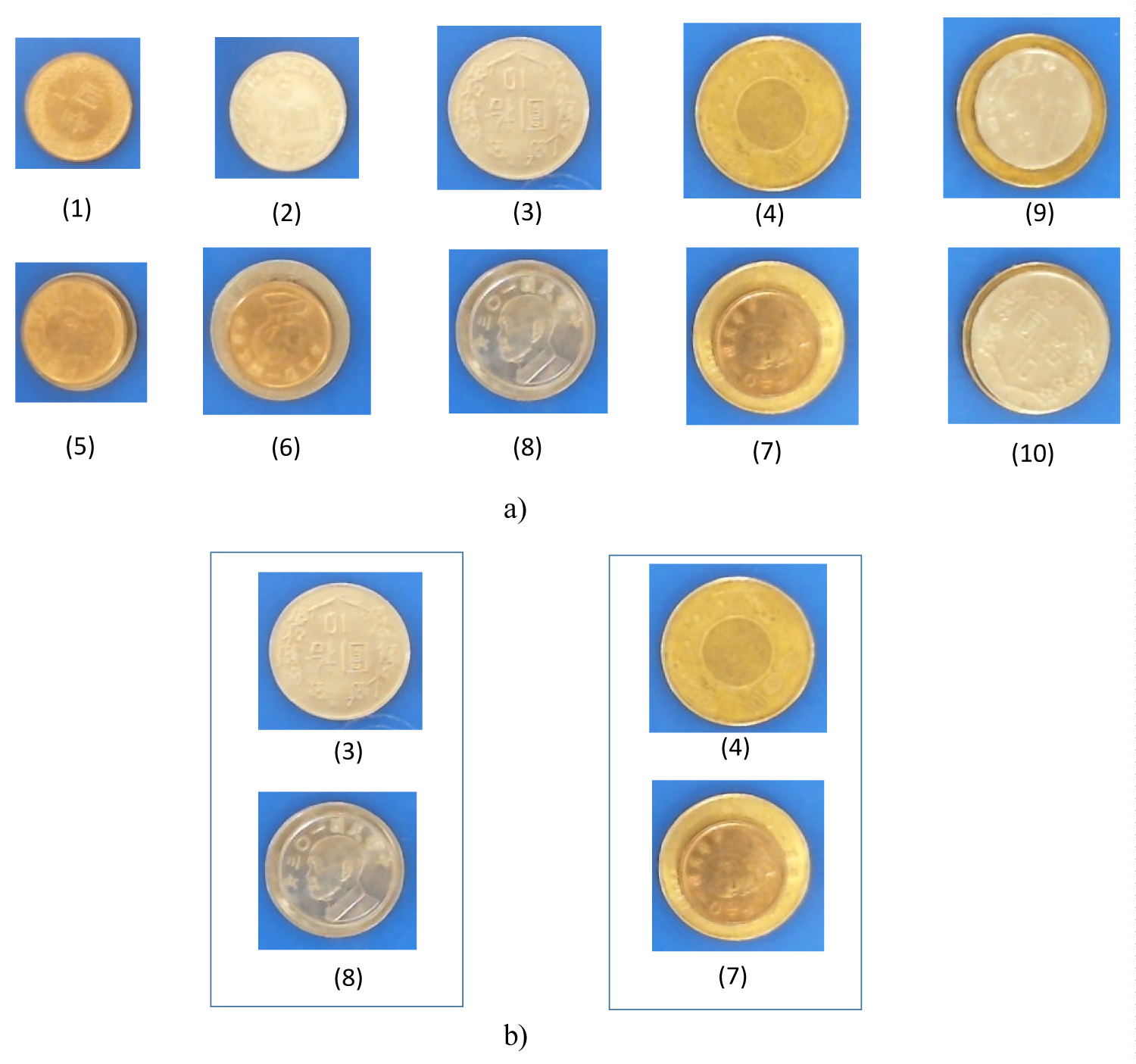

Possible case of independent and separated coins: (a) 10 possible cases of independent and separated coins; (b) Pair cases that are easily judged as the other case; Coins $10 and $5 + $10 and coins $50 and $50 + $1.

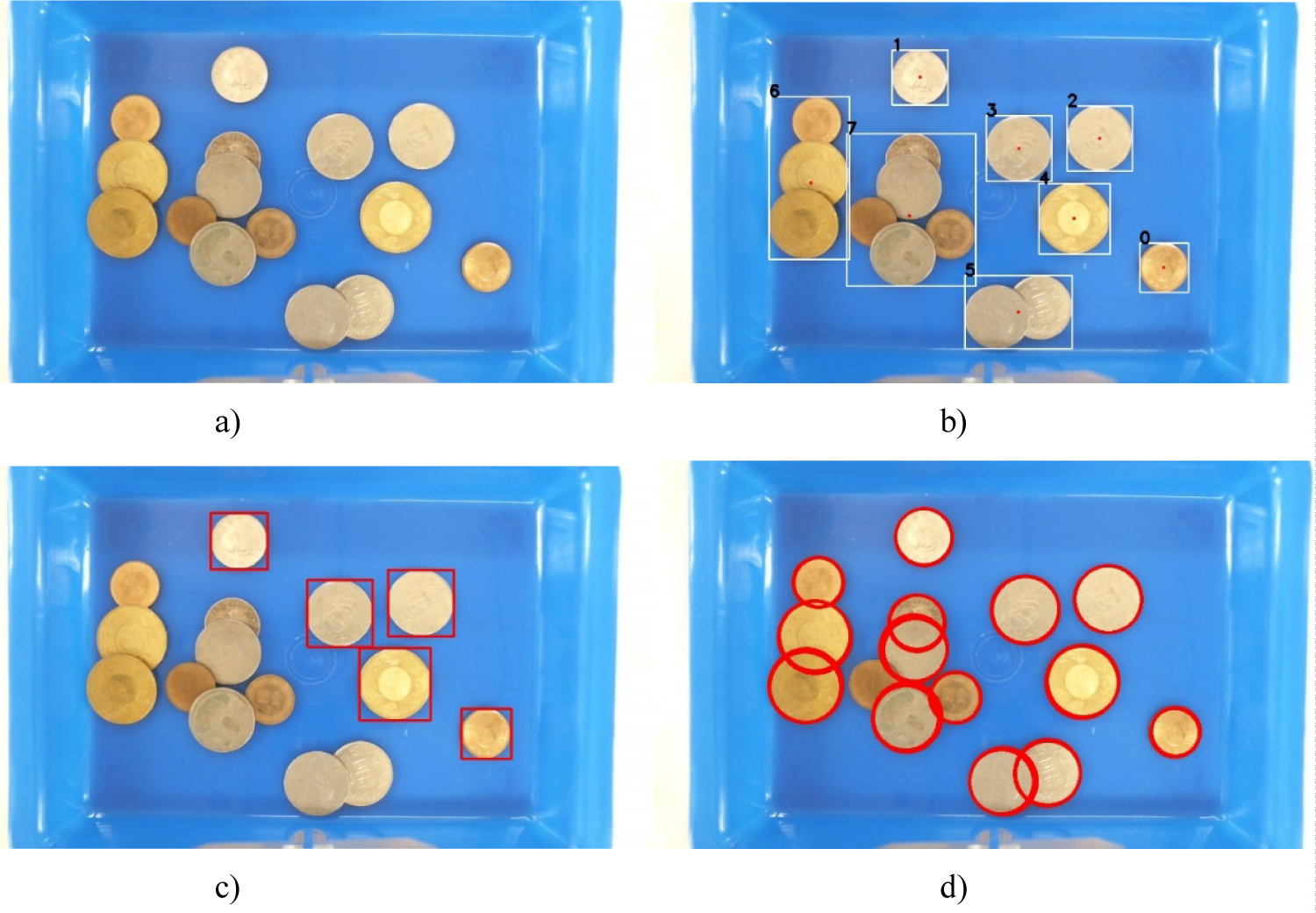

Figure 7: Coin image detection: (a) Original image; (b) Connected area; (c) Separated coins; (d) All detected coins.

Tables

Table 1: Reference values of coin radius.

Table 2: Success rate of classification for independent and separated coins.

References

- Rahardja K, Kosaka A (1996) Vision-based bin-picking: Recognition and localization of multiple complex objects using simple visual cues. IEEE/RSJ Inter Conf on Intelligent Robots and Systems, 1448-1457.

- Oh JK, Lee SH, Lee CH (2012) Stereo vision based automation for a bin-picking solution. International Journal of Control, Automation and Systems 10: 362-373.

- Liu MY, Tuzel O, Veeraraghavan A, Taguchi Y, Marks TK, et al. (2012) Fast object localization and pose estimation in heavy clutter for robotic bin picking. The International Journal of Robotics Research 31: 951-973.

- Pretto A, Tonello S, Menegatti E (2013) Flexible 3D localization of planar objects for industrial bin-picking with mono camera vision system. Inter Conf on Automation Science and Engineering, 168-175.

- Fukumi M, Omatu S, Takeda F, Kosaka T (1992) Rotation-invariant neural pattern recognition system with application to coin recognition. IEEE Transactions on Neural Networks 3: 272-279.

- Fukumi M, Omatu S (1993) Design a neural network for coin recognition by a genetic algorithm. IEEE, Proceedings of 1993 International Joint Conference on Neural Networks, 2109-2112.

- Haber R, Ramoser H, Mayer K, Penz H, Rubik M (2005) Classification of coins using an Eigen space approach. Pattern Recognition Letters 26: 61-75.

- Nölle M, Penz H, Rubik M, Mayer K, Holländer I, et al. (2003) Dagobert-A new coin recognition and sorting system. In: Sun C, Talbot H, Ourselin S, Adriaansen T, Digital image computing - techniques and applications. (7th edn), Sydney, Australia.

- Reisert M, Ronneberger O, Burkhardt H (2007) A fast and reliable coin recognition system. In: Hamprecht FA, Schnörr C, Jähne B, Pattern Recognition, DAGM, Springer, Germany.

- Kim S, Lee SH, Ro YM (2015) Image-based coin recognition using rotation-invariant region binary patterns based on gradient magnitudes. Journal of Visual Communication and Image Representation 32: 217-223.

Author Details

Ching-Long Shih* and Muhammad Qomaruz Zaman

Department of Electrical Engineering, National Taiwan University of Science and Technology, Taiwan

Corresponding author

Ching-Long Shih, Department of Electrical Engineering, National Taiwan University of Science and Technology, Taipei, Taiwan.

Accepted: September 28, 2020 | Published Online: September 30, 2020

Citation: Ching-Long S, Zaman MQ (2020) Random Coin Recognition and Robotic Pick-and-Place. Int J Robot Eng 5:027.

Copyright: © 2020 Ching-Long S, et al. This is an open-access article distributed under the terms of the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original author and source are credited.

Abstract

Bin-picking of randomly placed parts is an important application problem in robotic automation systems; coins, which are essentially two-dimensional objects used in daily commercial transactions, are a representative case. This paper designs a robotic eye-in-hand visual feedback control system that detects and classifies randomly distributed coins and picks and places them at target locations. The specific experimental tasks are to pick a coin from the coin tray and insert it into an individual moneybox, and to pick coins and stack those of the same denomination from the bottom up into a cylinder.

Keywords

Coin recognition, Eye-in-hand, Visual feedback, Pick-and-place

Introduction

Robotic automation systems that identify and pick randomly stacked components from a parts box cover an important range of applications [1-4]. If this goal is achieved, the scope and dexterity of robot-arm applications are enhanced and working-space costs are reduced. The two main research topics for reaching this goal are image detection and classification techniques for arbitrarily stacked parts and the design of an eye-in-hand visual feedback controller. The mechanical design of the end-effector gripper is also a key factor.

The developed algorithm for coin detection and classification can be applied in industrial lines where a robot needs to sort thin cylindrical objects, which are common in industries such as medicine or cosmetics packaging. It is also useful in automotive assembly automation, where there are many circular objects such as bearings, flat washers, or gears. It can also be used to sort objects that appear circular in the camera image, such as fruits or vegetables, whose quality can be judged by their color and size.

Rahardja and Kosaka applied a binocular camera system to detect images of randomly stacked components [1]. They used simple circular feature points as the judgment features of the parts. Although the image recognition results were good, that work did not report robot picking experiments. Oh, Lee, and Lee also applied an eye-in-hand binocular camera system to recognize and pick randomly stacked components [2]. They likewise used simple geometric features, the points of circular holes, as the judgment features of the parts and reported robot-arm picking experiments, but only a single mechanical component was identified. Liu, Tuzel, et al. completed a practical system for identifying and picking randomly stacked industrial 3D parts in a parts box [3]. In addition to the eye-in-hand camera, this work was characterized by a multi-LED flash camera and a fast 3D part shape matching method. Pretto, Tonello, and Menegatti established a robotic-arm eye-in-hand or eye-to-hand system for parts stacked randomly in a box, treating the task as 2D image recognition and robotic gripping of 2D parts such as coins [4]. They employed a single high-resolution black-and-white camera and LED lights to recognize and pick up hundreds of 2D parts stacked in a box.

A coin is essentially a 2D object, and it is also a medium of exchange found everywhere. Coin recognition and classification have many applications in commercial transactions, for example in vending machines and coin-counting machines. Most of these systems use electronic circuit hardware to count the number and currency value of coins one by one. In the past, image processing techniques for coin detection and classification were mostly based on judging a single coin. Image recognition methods for individual coins can be classified into neural networks [5,6], eigen-image and principal component analysis (PCA) [7], edge contour or shape matching methods [8-10], and so on.

Neural-network coin recognition methods first convert the coin image into a 20 × 20 (or 32 × 32) feature matrix by averaging grayscale values in a rectangular or polar coordinate system. Fukumi, et al. distinguished only four categories, the obverse and reverse sides of two coins, and reported recognition success rates of about 90%, 99%, and 96% [5,6]. The eigen-image and principal component analysis methods commonly used in face recognition systems are also applicable to coin identification. In [7], thousands of different coins were characterized by grayscale intensity, gradient, and edge information in the image coordinate system, and PCA-based identification achieved success rates of 92%, 95%, and 98%. Nölle, et al. handled thousands of different coins by first performing coin detection and then using the fast Fourier transform to compute similarity for coin verification in the image coordinate system [8]; their recognition success rate was as high as 99%. Reisert, Ronneberger, and Burkhardt used gradient-direction data in image polar coordinates as coin features and then applied the nearest-neighbor method to find the most similar coins in a database [9]; on thousands of coins, the recognition success rate reached 99%. Kim, Lee, and Ro proposed a new coin image feature representation, RFR-GM, based on rotation-invariant region binary patterns of gradient magnitudes [10]. Although its recognition success rate is about 90%, this representation requires only 23 vector elements, far fewer than the other methods described above.

Although previous research presents good methods and results for recognizing a single coin, there are few reliable methods for detecting connected and overlapping coins, and few works use a robot arm to pick and place coins. To identify and pick up randomly stacked parts in a parts box, this study designs a robot-arm coin gripping tool, an eye-in-hand image processing system, and a visual feedback control system. The system detects multiple randomly distributed coins and picks and places them at specified target positions. The specific design goals of this work include (1) placing the coins from the coin tray into an individual moneybox and (2) stacking coins of the same denomination from the coin tray from bottom to top into a cylinder.

System Description

The system architecture of this work, shown in Figure 1, consists of an industrial 6-axis robot arm with an eye-in-hand camera system and a blue coin tray with randomly placed coins. The end-effector is equipped with an electric linear-motion gripper with a travel range of 0 mm to 32 mm. We designed a coin gripping mechanism for the gripper tip, with a buffer spring in its inner movable space to compensate for height errors of the end-effector, as shown in Figure 2. We also designed a coin flip mechanism, shown in Figure 3, which turns the coin from horizontal to vertical to facilitate the next step of inserting it into the moneybox.

This paper proposes a reliable method for detecting and classifying multiple connected and overlapping coins. The image recognition approach for randomly stacked coins combines the connected-area method with Hough circle detection: the connected-area method quickly detects isolated coins, while Hough circle detection finds multiple connected and overlapping coins. The $50 and $1 coins are copper-toned, while the $10 and $5 coins are silver-toned. Therefore, for both separate coins and groups of overlapping, connected coins, detection must consider not only the coin's circular radius but also the color differences between coins in order to identify the coins on top. After a target coin is selected, the gripping angle is also an important factor in whether the coin can be gripped successfully. Finally, a mechanism is needed to judge whether the gripper is holding a coin, to avoid unnecessary actions.

The robot moves the eye-in-hand camera directly above the coin tray and keeps it parallel to the tray, so the visual feedback control system only needs to consider the two-dimensional plane. The visual feedback control strategy of the eye-in-hand system for picking and placing coins is divided into three steps: selecting a specific target coin, closed-loop control toward the specific target, and open-loop control of gripping or placing the coin. In gripping priority, the independently separated coins are picked first, in random order, followed by the overlapping and connected coins.

Coin Image Processing

Coin detection and classification

The image processing strategy for coin detection combines the advantages of the connected-area method and Hough circle detection to achieve simple and fast coin detection and visual feedback. First, the independently separated coins are detected by the connected-area method; then Hough circle detection is performed on each region of interest (ROI) whose area is larger than that of a single coin.

The detailed steps of coin detection are explained below.

Step 1: Connected area method

After the background of the blue coin tray is removed, the foreground image of the coins is obtained. A pixel whose HSV color value lies between (100, 20, 0) and (130, 255, 255) is classified as background; otherwise, it belongs to a coin. The independently separated coins are then detected from the area, radius, and axis lengths of the bounding rectangle of each connected area. If a remaining connected area is larger than a single coin, its coins are connected or overlapping, and detection continues with the circle detection of Step 2.
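A minimal pure-Python sketch of Step 1 follows. It assumes the image is already a 2-D list of (H, S, V) tuples on the OpenCV-style scale implied by the bounds above (H in 0 to 179), labels 4-connected foreground regions, and splits them by area only. The single-coin area limit is a hypothetical parameter, and the radius and bounding-rectangle checks described above are omitted for brevity.

```python
from collections import deque

# Background (blue tray) bounds from the text; assumed OpenCV-style HSV scale
# (H in 0..179, S and V in 0..255).
BG_LOW = (100, 20, 0)
BG_HIGH = (130, 255, 255)


def is_background(hsv):
    """True if an (H, S, V) pixel lies inside the blue-tray range (inclusive)."""
    return all(lo <= c <= hi for c, lo, hi in zip(hsv, BG_LOW, BG_HIGH))


def foreground_mask(hsv_image):
    """2-D list of (H, S, V) tuples -> 2-D list of booleans (True = coin pixel)."""
    return [[not is_background(px) for px in row] for row in hsv_image]


def connected_areas(mask):
    """Return each 4-connected foreground region as a list of (y, x) pixels."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    regions = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            queue, pixels = deque([(y, x)]), []
            while queue:  # breadth-first flood fill
                cy, cx = queue.popleft()
                pixels.append((cy, cx))
                for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            regions.append(pixels)
    return regions


def split_by_area(regions, single_coin_max_area):
    """Hypothetical area rule: small regions are single coins, larger ones go to Step 2."""
    singles = [r for r in regions if len(r) <= single_coin_max_area]
    groups = [r for r in regions if len(r) > single_coin_max_area]
    return singles, groups
```

In this sketch, the regions returned in `groups` are the candidates that would be passed on to the circle detection of Step 2.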

Step 2: Hough circle detection

In each region of interest obtained in Step 1, the edge contours of the coin image are used for Hough circle detection to find all circles whose radii match the coin radii.
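The voting idea behind Step 2 can be sketched for one known radius in pure Python, as a stand-in for a library routine rather than the authors' implementation. Each edge point votes for every integer center lying one radius away, and well-supported, well-separated centers are kept. The names `min_votes`, `min_dist`, and `n_angles` are hypothetical tuning parameters, not values from the paper; in practice the search would be repeated for each reference coin radius.

```python
import math
from collections import Counter


def hough_circles(edge_points, radius, min_votes, min_dist, n_angles=120):
    """Fixed-radius Hough circle transform on (x, y) edge points.

    Every edge point votes once for each integer center lying `radius` away;
    centers with at least `min_votes` votes are returned strongest first,
    skipping any within `min_dist` of a stronger one (simple non-max suppression).
    """
    acc = Counter()
    for x, y in edge_points:
        centers = {
            (round(x - radius * math.cos(2 * math.pi * k / n_angles)),
             round(y - radius * math.sin(2 * math.pi * k / n_angles)))
            for k in range(n_angles)
        }
        acc.update(centers)  # a set, so one vote per center per edge point
    found = []
    for center, votes in acc.most_common():
        if votes < min_votes:
            break
        if all(math.dist(center, f) >= min_dist for f in found):
            found.append(center)
    return found
```

Because each overlapping coin still exposes a large arc of its rim, its true center collects many votes even when part of the rim is hidden, which is why voting suits connected coins better than whole-region measurements.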

Step 3: Sort by distance

The circles detected in Steps 1 and 2 are sorted in ascending order of the distance between each circle's center and the image center.

This step is one of the requirements for visual feedback control.
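Step 3 amounts to a sort keyed on the Euclidean distance to the image center. The sketch assumes detected circles are stored as hypothetical `(cx, cy, r)` tuples in pixel coordinates.

```python
import math


def sort_by_center_distance(circles, image_size):
    """Sort (cx, cy, r) circles by distance from the image center, nearest first."""
    width, height = image_size
    center = (width / 2, height / 2)
    return sorted(circles, key=lambda c: math.dist((c[0], c[1]), center))
```

Taking the nearest coin first keeps the chosen target close to the image center, so the closed-loop motion toward it is short.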

Step 4: Classification of coins

Coins are classified by comparing each detected image radius with the reference values listed in Table 1. The ratio of a coin's image radius $R$ (in pixels) to its actual radius $r$ equals the ratio of the camera's internal parameter, the focal length $f$ (in pixels), to the camera height $Z$ above the coin, i.e., $\frac{R}{r} = \frac{f}{Z}$, so that $R = \frac{f\,r}{Z}$.

Note: , mm, .
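The radius relation can be sketched as a nearest-reference classifier. The physical radii below are illustrative values assumed from the nominal diameters of New Taiwan dollar coins (roughly 20, 22, 26, and 28 mm), not the paper's Table 1, and `f_px`, `z_mm`, and `tolerance_px` are hypothetical parameters.

```python
# Assumed physical coin radii in mm (illustrative; not the paper's Table 1 values).
COIN_RADIUS_MM = {"$1": 10.0, "$5": 11.0, "$10": 13.0, "$50": 14.0}


def image_radius(r_mm, f_px, z_mm):
    """Pinhole relation R / r = f / Z, so R = f * r / Z (in pixels)."""
    return f_px * r_mm / z_mm


def classify_by_radius(measured_px, f_px, z_mm, tolerance_px):
    """Return the denomination whose expected image radius is closest, or None
    if even the closest one differs by more than `tolerance_px`."""
    best, best_err = None, float("inf")
    for name, r_mm in COIN_RADIUS_MM.items():
        err = abs(measured_px - image_radius(r_mm, f_px, z_mm))
        if err < best_err:
            best, best_err = name, err
    return best if best_err <= tolerance_px else None
```

Radius alone cannot resolve every case (the experiments note that a $10 coin and a $5 + $10 pair share the same outer radius), which is why the described system also uses color.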

Detection of coins in the upper layer

When picking up a coin, the robot preferentially selects a coin in the upper layer of the coin tray, so detecting the upper coin is an important step. Two cases must be considered: whether multiple coins overlap within a separated coin region, and whether a coin is covered within a region of multiple connected and overlapping coins.

Case 1: Separated overlapping coins

After Canny edge detection is applied to the separated coins, the edge pixels lying on the circle of each coin's image radius are counted and divided by that image radius. The top coin is the one whose value exceeds a high threshold and whose image radius is the smallest.
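One possible reading of Case 1 is sketched below, assuming a binary Canny edge map stored as a 2-D list of booleans and candidate circles given as `(cx, cy, R)` tuples. A fully visible rim gives a ratio of edge pixels to radius that is roughly constant, while a rim partly hidden under another coin gives a lower ratio. The sampling density and `high_threshold` are hypothetical.

```python
import math


def ring_edge_ratio(edges, cx, cy, radius, n_samples=360):
    """Count distinct edge pixels on the circle of `radius` around (cx, cy),
    divided by the radius."""
    hits = set()
    for k in range(n_samples):
        t = 2 * math.pi * k / n_samples
        x = round(cx + radius * math.cos(t))
        y = round(cy + radius * math.sin(t))
        if 0 <= y < len(edges) and 0 <= x < len(edges[0]) and edges[y][x]:
            hits.add((x, y))
    return len(hits) / radius


def select_top_coin(edges, candidates, high_threshold):
    """Among (cx, cy, R) candidates whose ratio exceeds the threshold,
    return the one with the smallest image radius, or None."""
    passing = [c for c in candidates if ring_edge_ratio(edges, *c) > high_threshold]
    return min(passing, key=lambda c: c[2]) if passing else None
```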

Case 2: Connected and overlapped coins

For each detected coin, a histogram of the B channel in the BGR color space is computed over the small circular image area that the coin occupies. The B-channel histogram is used instead of a conventional grayscale histogram because the former carries color information and the latter does not. When a coin is not covered, its histogram is concentrated near the mean value; when it is covered, its histogram is more dispersed. Therefore, by computing the standard deviation of the histogram and comparing it with a threshold, we can judge whether a coin is in the upper layer: a standard deviation below the threshold indicates an uncovered coin.
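The covered/uncovered test reduces to the spread of B values inside each detected circle. The sketch assumes the image is a 2-D list of (B, G, R) tuples and uses the population standard deviation of the pixel values, which equals the standard deviation of the histogram's distribution; `std_threshold` is a hypothetical parameter.

```python
import statistics


def b_channel_std(bgr_image, cx, cy, radius):
    """Population std. deviation of B values (index 0 of each BGR pixel) inside the circle."""
    values = [
        bgr_image[y][x][0]
        for y in range(max(0, cy - radius), min(len(bgr_image), cy + radius + 1))
        for x in range(max(0, cx - radius), min(len(bgr_image[0]), cx + radius + 1))
        if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2
    ]
    return statistics.pstdev(values)


def is_upper_layer(bgr_image, cx, cy, radius, std_threshold):
    """Concentrated histogram (small spread) -> coin assumed uncovered, i.e. on top."""
    return b_channel_std(bgr_image, cx, cy, radius) < std_threshold
```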

Coin gripping angle

Case 1: Separated coins

When the robot selects a coin to grip, that coin is called the target, and the coins near the target as well as the peripheral wall of the coin tray are defined as obstacles. To grip the target, the end-effector must be rotated to an appropriate angle that avoids these obstacles. With the gripper open, the gripper jaws are rotated about the center of the target coin at the gripper's opening radius, and the positions they occupy are checked for obstacles. The scan proceeds clockwise in fixed angular increments until no obstacle point is encountered; that angle is the rotation required to grip the coin.
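A sketch of the angle scan under stated assumptions: the two gripper jaws are modeled as short segments of half-width `jaw_half_width` pixels placed on opposite sides of the target at distance `jaw_radius`, and `is_obstacle(x, y)` is a hypothetical callback that returns True for pixels of neighboring coins or the tray wall. Because the two jaws are symmetric, only angles in [0, 180) degrees need to be checked.

```python
import math


def grip_angle(cx, cy, jaw_radius, is_obstacle, step_deg=5.0, jaw_half_width=3):
    """Return the first jaw angle (degrees) in [0, 180) at which neither jaw
    touches an obstacle, or None. With image y pointing down, increasing the
    angle rotates the jaws clockwise on screen."""
    steps = int(180 / step_deg)
    for i in range(steps):
        theta = math.radians(i * step_deg)
        ux, uy = math.cos(theta), math.sin(theta)  # jaw opening direction
        px, py = -uy, ux                           # jaw width direction
        blocked = False
        for sign in (1, -1):                       # the two opposing jaws
            jx = cx + sign * jaw_radius * ux
            jy = cy + sign * jaw_radius * uy
            for w in range(-jaw_half_width, jaw_half_width + 1):
                if is_obstacle(round(jx + w * px), round(jy + w * py)):
                    blocked = True
        if not blocked:
            return i * step_deg
    return None
```

For Case 2 below, the same function applies unchanged if `is_obstacle` reports only the pixels of upper-layer coins.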

Case 2: Connected and overlapped coins

For connected and overlapping coins, only the coins in the upper layer are treated as obstacles. Thus, the same angle-scanning procedure as in Case 1 can be used to calculate the rotation angle for the upper target coin.

Coin holding detection

Judging whether the robot gripper has successfully gripped a coin is also an important task. This function avoids unnecessary movements and provides the basis for deciding whether to pick up a coin again. The eye-in-hand camera is mounted so that a coin held below the camera falls within the camera image. The system checks whether the area of the largest connected area in the corresponding region of interest exceeds an area threshold, which correctly determines whether the gripper is holding a coin. Figure 4 compares the connected area in the region of interest when the robot gripper is not holding a coin and when it is holding a coin.
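The holding check can be sketched as a largest-connected-area test on a binary foreground mask of the region of interest. The mask format (a 2-D list of booleans) and `area_threshold` are assumptions for illustration.

```python
def largest_connected_area(mask):
    """Pixel count of the largest 4-connected True region in a 2-D boolean list."""
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    best = 0
    for y in range(h):
        for x in range(w):
            if not mask[y][x] or seen[y][x]:
                continue
            seen[y][x] = True
            stack, area = [(y, x)], 0
            while stack:  # depth-first flood fill
                cy, cx = stack.pop()
                area += 1
                for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                    if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        stack.append((ny, nx))
            best = max(best, area)
    return best


def is_holding_coin(roi_mask, area_threshold):
    """True if the largest foreground blob in the ROI exceeds the (hypothetical) threshold."""
    return largest_connected_area(roi_mask) > area_threshold
```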

Visual feedback control

The basic actions of the robotic visual feedback control are X-axis movement, Y-axis movement, Z-axis rotation, and Z-axis movement. Only the relative movement of the camera along the X- and Y-axes requires a visual feedback controller; Z-axis rotation and Z-axis translation are controlled in open loop. The control program first randomly picks up the independently separated coins and then randomly picks up the connected and overlapping coins.

The visual feedback control strategy of the eye-in-hand system to pick-and-place coins is divided into the following three steps.

Step 1: Select a specific target within the visible range of the camera

The specific targets used in coin detection, classification, and picking-and-placing include the coins, the rectangular blue coin tray, the cylindrical coin flip aid, and the rectangular holes of the moneybox.

Step 2: Closed loop control for the specific target

The eye-in-hand camera is moved continuously until the center point of the specific target coincides with the center point of its image. The end-effector movements $\Delta X$ and $\Delta Y$ follow the closed-loop proportional feedback controller $\Delta X = K_p e_x$ and $\Delta Y = K_p e_y$, where $e_x$ and $e_y$ are the respective differences between the target center point and the image center point, and $K_p$ is the proportional gain.
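The proportional law can be exercised with a toy simulation. The sketch assumes an idealized plant in which a camera move of d pixels cancels exactly d pixels of image error; in that model the error shrinks by a factor (1 - kp) per step, so any 0 < kp < 2 converges. The gain, tolerance, and the sign mapping between image and robot axes are hypothetical.

```python
import math


def p_command(target_px, center_px, kp):
    """Proportional law: camera move (dX, dY) = kp * (target - image center)."""
    ex = target_px[0] - center_px[0]
    ey = target_px[1] - center_px[1]
    return kp * ex, kp * ey


def simulate_centering(error_px, kp=0.5, tol_px=1.0, max_iters=100):
    """Toy loop assuming a camera move of d pixels cancels exactly d pixels of error.

    Returns (iterations used, final error); the error shrinks by (1 - kp) per step.
    """
    ex, ey = error_px
    for k in range(max_iters):
        if math.hypot(ex, ey) <= tol_px:
            return k, (ex, ey)
        dx, dy = p_command((ex, ey), (0.0, 0.0), kp)
        ex, ey = ex - dx, ey - dy
    return max_iters, (ex, ey)
```

With kp = 0.5, an initial error of (64, -32) pixels halves at each step and falls within a 1-pixel tolerance after seven iterations; a real arm adds latency and calibration error, so the usable gain range would be narrower.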

Step 3: Open-loop control to grip or place coins

After the eye-in-hand camera has been moved above the target in Step 2, the robot rotates and moves the gripper to the appropriate angle and height to grip or place the coin.

The flowchart of the visual feedback control system for the robot picking-and-placing a coin is shown in Figure 5.

Experiments and Results

In the first experiment, independent and separated coins are tested. Independent and separated coins can be divided into 10 possible combinations, as shown in Figure 6a. Two pairs, shown in Figure 6b, are easily mistaken for each other: a $10 coin versus $5 + $10 coins, and a $50 coin versus $50 + $1 coins, because their outer radii are the same and their inner colors are similar. The results of the detection experiments for independently separated coins are shown in Table 2. The average classification success rate for independent and separated coins is 90.5%.

In the second experiment, connected and overlapping coins are tested. Figure 7 shows the image processing of the first step of coin detection, which distinguishes independently separated coins from connected and overlapping ones. Figure 8 compares the B-channel histograms of uncovered and covered coins. The success rate of judging the upper layer is about 90%.

Three demonstration videos of the robotic coin gripping and placing experiments were recorded:

(1) Identifying and gripping coins that are separated and overlapped; https://youtu.be/1E3nAmdr8gU?t=5s

(2) Classifying and placing the coins from the coin tray into an individual moneybox; https://youtu.be/1E3nAmdr8gU?t=2m57s

(3) Stacking coins in the coin tray into a cylindrical shape for each coin size. https://youtu.be/1E3nAmdr8gU?t=7m50s

Overall, the robotic coin detection and pick-and-place system developed in this work has a success rate of about 90%.

In the next step, we will consider the classification of overlapped coins, such as a smaller coin lying completely on top of a larger coin. This problem matters for recognizing randomly distributed coins, which may be completely or partially overlapped. Moreover, a success rate of 98% or higher is set as the goal, because reliable coin recognition and classification are essential now that automatic business machines are widely used in daily life.

First, a neural-network-based classifier is planned to recognize a single coin or overlapped coins, in which a smaller coin may lie completely on top of a larger coin. Each coin image is characterized by a color histogram vector of size 768. The histogram data are much smaller than the raw image data and are invariant to both translation and rotation. PCA (principal component analysis) based dimensionality reduction can then be performed on the histogram data of the coin-image training set, and supervised learning is applied to tune the neural network weight matrices using full-batch conjugate gradient minimization. Second, recognition of randomly distributed and overlapping coins will also be addressed with state-of-the-art deep-learning object detection methods such as YOLOv4 and Mask R-CNN.
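A color histogram feature of this size can be formed by concatenating three 256-bin channel histograms (3 × 256 = 768); that layout is an assumption, since the text gives only the size. Because the histogram discards pixel positions, it does not change when the coin is translated or rotated within its mask. Normalizing by the pixel count is a further assumption that makes coins of different image sizes comparable.

```python
def color_histogram(pixels, bins_per_channel=256):
    """Concatenate per-channel histograms of 8-bit 3-channel pixels.

    With 256 bins per channel the vector has 3 * 256 = 768 entries. Counts are
    divided by the pixel count (an assumption), so each channel sums to 1.
    """
    hist = [0] * (3 * bins_per_channel)
    for px in pixels:
        for ch, value in enumerate(px):
            hist[ch * bins_per_channel + value * bins_per_channel // 256] += 1
    n = len(pixels)
    return [count / n for count in hist]
```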

Conclusion

This paper has designed a 6-axis robotic eye-in-hand visual feedback control system that can detect and classify multiple randomly placed coins and pick and place them at specified target positions. Its main features are as follows.

(1) The coin gripper is equipped with a buffer spring so that it can clamp coins lying in multiple layers and compensate for the height error of the end-effector.

(2) Information such as the connected area of the coin, the circular outline and the color characteristics provides a reliable method for the detection and judgment of multiple connected and overlapping coins.

(3) Detecting coins within small regions of interest whenever possible, together with image-based servo control toward the gripping target, improves both the accuracy of coin recognition and the working speed of the robot arm.

(4) Calculating the coin gripping angle helps avoid picking a coin incorrectly.

(5) The coin-holding judgment function avoids unnecessary movements.

The current success rate of coin identification and picking is about 90%, which has not yet reached the goal of 98% or more. The next step is to apply artificial intelligence and machine learning techniques to improve identification results.

Acknowledgements

This work is supported by grants from Taiwan's Ministry of Science and Technology, MOST 108-2637-E-011-001 and MOST 109-2637-E-011-003.